AI Needs Philosophers As Much As Coders

This essay was originally distributed via my newsletter.

Sam Altman wants you to remember how much energy was required to train you for 20-plus years. I mean you — the human reading these words.

How much food went into you. How much money was invested in your education. How much time parents, teachers, mentors, bosses, and teams spent training you. How many overall resources you consumed to make you the person you are today.

In just one interview clip, Altman illustrated why it’s so dangerous to let coders build artificial intelligence alone.

Altman wants you to think of yourself as an LLM.

He wants you to equate the training of a complex organism in a complex world with the training of a complex series of 1s and 0s.

This is precisely why so many people despise AI, in stark contrast with the general sentiment at the dawn of the internet age.

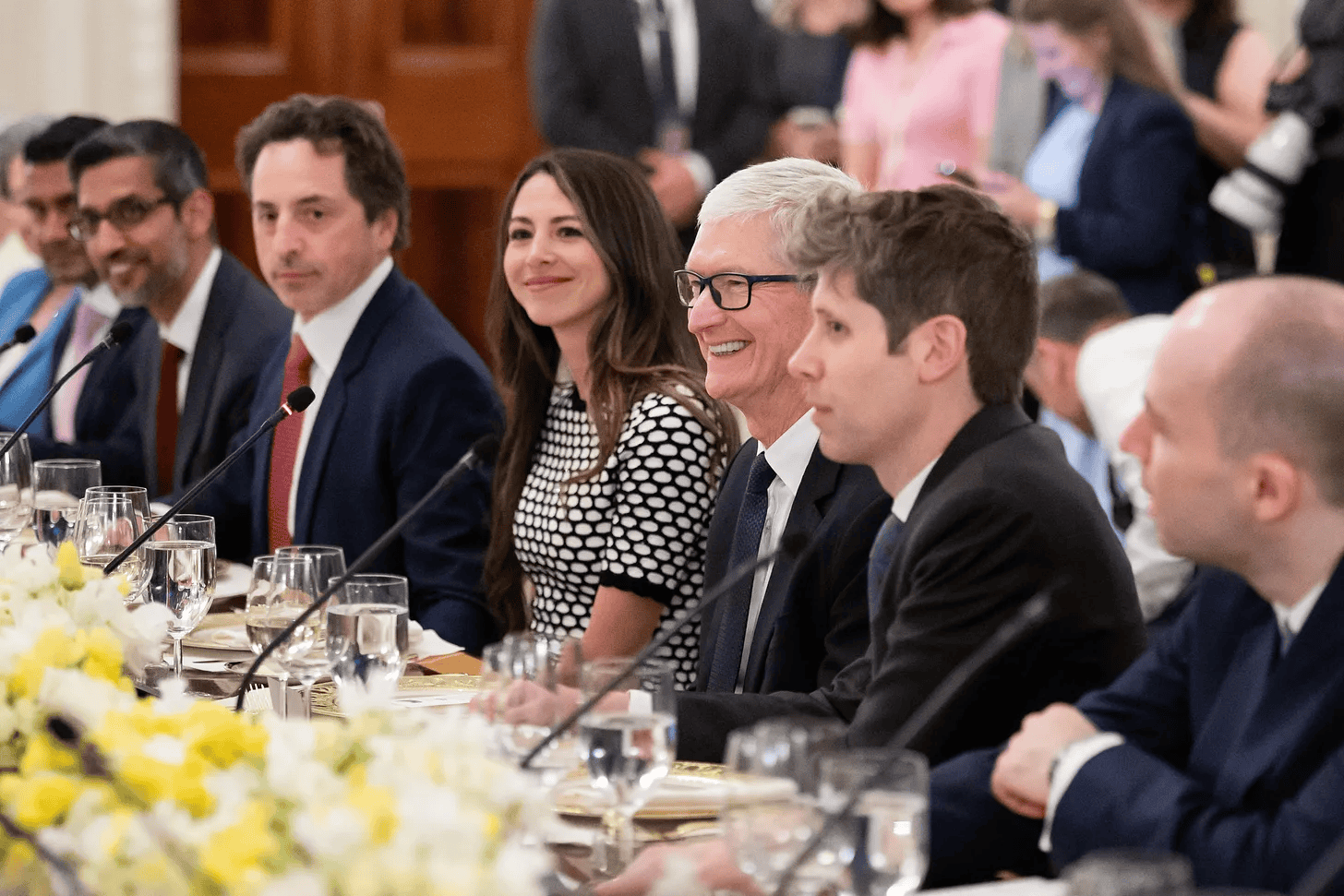

So many of the AI figureheads like OpenAI’s Sam Altman simply struggle to tell good stories. They may be crafty and clever in many ways, but when it comes to communicating a promising vision of an AI future, they mostly fall flat.

Or crash and burn.

In the clip above, Altman attempts to justify electricity load and training costs. Basically, why OpenAI is plowing an unprecedented amount of money into ChatGPT. Why they’re consuming so much electricity and water in the process. Why inference costs (the operating costs) are still so high.

So in an effort to empathize with his fellow humans, Altman thought it was best to equate his AI training efforts directly with them. After all, training a single human is pretty expensive (and as a dad I can attest to this). Humans eat a lot of food, require attention when they’re young and helpless, and we all use way more electricity and resources than humans did a century ago.

Therefore, it must be reasonable to spend so much to train AI, right?

If Sam Altman were a philosopher or even somewhat trained in the humanities, he never would have compared the training of a human to the training of a machine.

He would have recognized the innate value and complexity of humanity. How humans are far more than LLMs that process and train on data. How human consciousness and reason are the most uniquely special gifts we’ve discovered in the universe to date.

But instead of recognizing intrinsic value in humanity or what separates us from LLMs or apes, Altman wants to commoditize us.

We cost “x” and LLMs cost “y” to train. Those costs are comparable, so Altman’s costs for “y” at OpenAI are justified.

Perhaps we’ll debate AI rights like we do human rights one day, but that day is not here.

And it’s this sort of dehumanizing language that gives tech CEOs presumed license to use humans to their business advantage. With seemingly no limits.

Harvest whatever data possible. Entice users to scroll and stay on platforms at all costs. Ignore addiction.

Make generative AI tools like ChatGPT that act as cheerleaders who never stop engaging and asking follow-up questions.

Buried in Altman’s dehumanizing statement is the language of replacement too.

As if LLMs that power AI are so similar to humans that our training is comparable.

As if the decades of human training are bugs instead of features.

But isn’t that training the entire point of the human experience? To struggle. To learn. To grow. To come out the other side better than before.

It is the imperfection and the messiness of humanity that also makes it beautiful. It’s the eternal mystery how a species capable of so much greatness can also cause so much devastation.

AI is only a reflection of this, at least for now. It hasn’t made dramatic leaps like Einstein did when developing the theory of relativity. It hasn’t had experiences of deep tragedy and ecstatic joy. It doesn’t even know why it thinks the way it does.

AI may crush me in chess, but can it effectively explain why it crushed me, or better yet, teach me how to beat it?

Humans are not just operators and doers, but some of the greatest teachers and thinkers too.

Perhaps these deeper questions are why some AI companies like Anthropic have hired philosophers. It’s why they’re focused on ethics, values, and purpose. It’s why they emphasize AI safety as much as revenue generated.

Few people outside the tech world will be convinced by comparisons that equate humans with machines. But many of those same people would be inspired if AI could help them achieve a higher purpose.

A bigger goal. Some greater good.

But these areas are in the realm of philosophers, not coders, which is why we need both.
